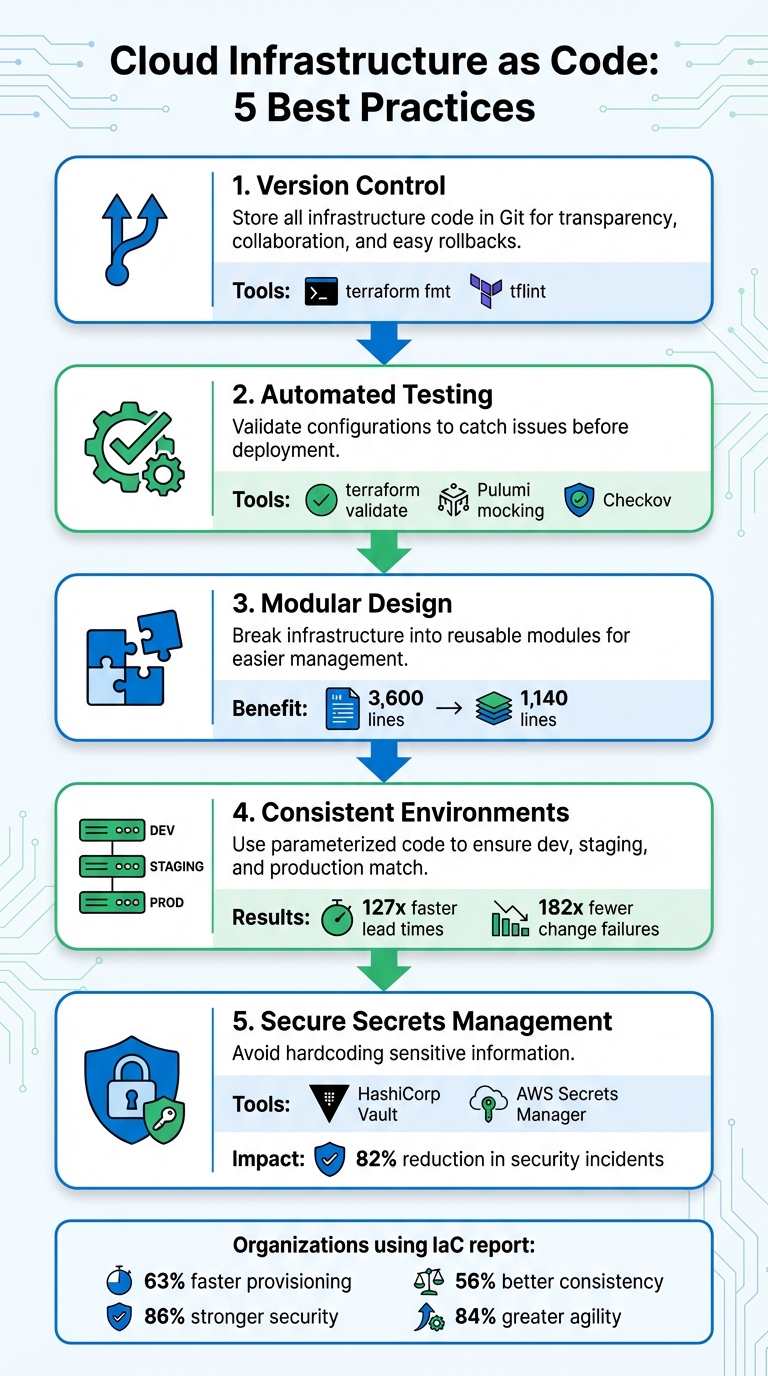

Cloud Infrastructure as Code: 5 Best Practices

- Version Control: Store all your infrastructure code in Git for transparency, collaboration, and easy rollbacks. Use tools like terraform fmt and tflint to catch errors early.

- Automated Testing: Validate configurations with tools like terraform validate or Pulumi’s mocking features to catch issues before deployment.

- Modular Design: Break infrastructure into reusable modules for easier management and scalability.

- Consistent Environments: Use parameterized code and remote backends to ensure development, staging, and production match.

- Secure Secrets Management: Avoid hardcoding sensitive information by using tools like HashiCorp Vault or AWS Secrets Manager.

These practices ensure reliable, scalable, and secure cloud deployments while streamlining workflows. Whether you're using Terraform, Pulumi, or advanced platforms like Kanu AI, these principles will help your team deploy faster and more confidently.

5 Best Practices for Cloud Infrastructure as Code Implementation



1. Use Version Control for All Infrastructure Code

Store all your infrastructure code in Git to establish a single, reliable source of truth. Every change - whether it’s adding a VPC or tweaking a database - gets tracked with details like timestamps, authors, and commit messages. This creates a comprehensive audit trail, so you’ll always know who made what change and when. It eliminates guesswork and ensures transparency. Let’s dive into how version control boosts collaboration, automation, scalability, and security.

Improves Collaboration and Reduces Errors

Version control doesn’t just track changes - it also enhances teamwork. With a pull request workflow, every change undergoes peer review before deployment. This process allows team members to suggest improvements, flag potential issues, and refine the code collaboratively.

To avoid simple mistakes, run terraform fmt and tflint as pre-commit hooks: terraform fmt enforces canonical formatting, and tflint flags problems such as invalid instance types, unused declarations, or deprecated syntax before the code is even committed. For teams sharing infrastructure, a remote backend with state locking (e.g., S3 with a DynamoDB lock table, or the S3 backend's native use_lockfile option in Terraform 1.10 and later) prevents multiple users from applying conflicting updates that could corrupt the shared state file.
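State locking is easiest to see in miniature. The Python sketch below is a hypothetical, in-memory stand-in for a backend lock table (it is not the S3 or DynamoDB API): a lock is taken with a conditional "write only if absent", so a second apply against the same state key is refused until the first one releases it.

```python
import threading
from typing import Dict, Optional


class StateLockTable:
    """Hypothetical in-memory lock table keyed by state file path.

    Mimics the conditional write a real backend performs: a lock record is
    created only if none exists for that state key.
    """

    def __init__(self) -> None:
        self._holders: Dict[str, str] = {}
        self._mutex = threading.Lock()  # makes check-and-set atomic across threads

    def acquire(self, state_key: str, owner: str) -> bool:
        with self._mutex:
            if state_key in self._holders:
                return False  # someone else is mid-apply; caller must wait or abort
            self._holders[state_key] = owner
            return True

    def release(self, state_key: str, owner: str) -> None:
        with self._mutex:
            # Only the current holder may release, so a stray release is ignored.
            if self._holders.get(state_key) == owner:
                del self._holders[state_key]

    def holder(self, state_key: str) -> Optional[str]:
        with self._mutex:
            return self._holders.get(state_key)


if __name__ == "__main__":
    locks = StateLockTable()
    key = "prod/network.tfstate"
    print(locks.acquire(key, "alice"))  # True: alice starts an apply
    print(locks.acquire(key, "bob"))    # False: bob is blocked
    locks.release(key, "alice")
    print(locks.acquire(key, "bob"))    # True: bob can proceed now
```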

Supports Automation and CI/CD Integration

Version control plays a key role in enabling automated pipelines. Once you commit code to a repository, CI/CD tools can kick off validation checks, run security scans (using tools like Checkov), and enforce policies as code - all before provisioning any resources.

This approach shifts problem detection to earlier stages, saving time and reducing risks. As ARPHost explains:

The foundational principle of any robust infrastructure as code (IaC) strategy is treating infrastructure definitions with the same rigor as application code.

Promotes Scalability and Maintainability

By breaking your infrastructure into smaller, reusable modules and storing them in version control, you can build a library of tested and approved patterns. Instead of duplicating code across projects, teams can reference specific tagged versions of modules, such as v1.2.0, for common elements like networking or databases.

This modular approach simplifies bug fixes and rollbacks. If something goes wrong, you can quickly pinpoint the issue and revert to a previous version, minimizing downtime.

Strengthens Security and Compliance

Version control also bolsters security and helps meet compliance requirements. An auditable record of every change is invaluable for regulatory purposes. Using secret managers like HashiCorp Vault ensures credentials aren’t hardcoded, maintaining traceability while protecting sensitive data.

Additionally, a .gitignore file is essential for keeping sensitive information - such as API keys, local state files (.tfstate), and other private data - out of your repository. This simple step goes a long way in safeguarding your infrastructure.
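A .gitignore only helps if its patterns are right, so some teams add a second line of defense. Here is a minimal Python sketch, assuming a pre-commit hook that passes in the staged paths (for example from git diff --cached --name-only); the pattern list is an illustrative assumption, not an official template.

```python
from fnmatch import fnmatchcase
from pathlib import PurePosixPath
from typing import Iterable, List

# Illustrative patterns a Terraform repo usually keeps out of Git (not exhaustive).
SENSITIVE_PATTERNS = [
    "*.tfstate",    # local state can contain secrets in plain text
    "*.tfstate.*",  # e.g. terraform.tfstate.backup
    ".terraform",   # local provider/module cache directory
    "*.tfvars",     # may hold real values; commit sanitized examples instead
    "*.pem",        # private keys and certificates
    ".env",         # local environment files
]


def flag_sensitive(paths: Iterable[str]) -> List[str]:
    """Return paths whose file name, or any parent directory, matches a pattern."""
    flagged = []
    for path in paths:
        parts = PurePosixPath(path).parts
        if any(fnmatchcase(part, pattern) for part in parts for pattern in SENSITIVE_PATTERNS):
            flagged.append(path)
    return flagged


if __name__ == "__main__":
    import sys

    hits = flag_sensitive(sys.argv[1:])
    for hit in hits:
        print(f"refusing to commit sensitive file: {hit}")
    sys.exit(1 if hits else 0)  # non-zero exit blocks the commit
```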

2. Test Infrastructure Configurations Automatically

Automated testing plays a crucial role in catching infrastructure issues early, which helps avoid expensive fixes in production. When paired with version-controlled code, it ensures that every change is thoroughly validated. A well-thought-out testing strategy that balances speed, cost, and coverage is key to identifying problems before deployment.

Improves Collaboration and Reduces Errors

Static analysis tools like terraform validate, tflint, and Checkov are excellent for catching syntax errors, formatting issues, and security misconfigurations within seconds - without deploying anything. Infrastructure engineer Mattias Fjellström emphasizes:

A good rule of thumb is that your deployment pipeline should never fail on the terraform validate command. You should catch these errors during development.

For more in-depth checks, unit tests can validate module logic without incurring cloud usage costs. Terraform 1.6 introduced a native testing framework (terraform test) that lets you write declarative tests in HCL; to keep those tests free of real infrastructure, run them with command = plan or, from Terraform 1.7, against mocked providers. Pulumi, on the other hand, offers mocking capabilities for testing infrastructure logic in languages like Python or Go without making real cloud calls. These testing methods create a strong foundation for continuous integration/continuous deployment (CI/CD) pipelines.
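The same principle can be shown in plain Python rather than with Pulumi's or Terraform's own test tooling: keep pure logic apart from resource declarations so it can be unit-tested with no cloud calls at all. Here plan_subnets is a hypothetical helper that a network module might use to compute subnet CIDRs.

```python
from __future__ import annotations

import ipaddress
import itertools
import unittest


def plan_subnets(vpc_cidr: str, count: int, new_prefix: int) -> list[str]:
    """Hypothetical module logic: carve `count` subnets of size /new_prefix from a VPC CIDR."""
    network = ipaddress.ip_network(vpc_cidr)  # ValueError on malformed input
    subnets = list(itertools.islice(network.subnets(new_prefix=new_prefix), count))
    if len(subnets) < count:
        raise ValueError(f"{vpc_cidr} cannot hold {count} /{new_prefix} subnets")
    return [str(s) for s in subnets]


class PlanSubnetsTest(unittest.TestCase):
    # Pure computation: runs in milliseconds and never touches a cloud API.
    def test_carves_consecutive_ranges(self) -> None:
        self.assertEqual(
            plan_subnets("10.0.0.0/16", 2, 24), ["10.0.0.0/24", "10.0.1.0/24"]
        )

    def test_rejects_more_subnets_than_fit(self) -> None:
        with self.assertRaises(ValueError):
            plan_subnets("10.0.0.0/24", 3, 25)


if __name__ == "__main__":
    unittest.main()
```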

Supports Automation and CI/CD Integration

A pyramid testing approach works well: start with fast static analysis, then move to unit, integration, and end-to-end tests. This "fail-fast" method halts the pipeline when inexpensive tests fail, saving time and resources by avoiding unnecessary integration tests.
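Here is a minimal Python sketch of the fail-fast idea. Each stage is a name plus a zero-argument check. In a real pipeline a check might wrap something like subprocess.run(["terraform", "validate"]).returncode == 0, but the stage names and lambdas here are placeholders.

```python
from typing import Callable, List, Sequence, Tuple

Stage = Tuple[str, Callable[[], bool]]


def run_pipeline(stages: Sequence[Stage]) -> Tuple[bool, List[str]]:
    """Run stages in order (cheapest first) and stop at the first failure.

    Returns (passed, names_of_stages_that_ran), so you can see which expensive
    stages were skipped.
    """
    ran: List[str] = []
    for name, check in stages:
        ran.append(name)
        if not check():
            return False, ran  # fail fast: later, costlier stages never start
    return True, ran


if __name__ == "__main__":
    pyramid = [
        ("static analysis", lambda: True),    # e.g. terraform validate, tflint
        ("unit tests", lambda: False),        # simulated failure
        ("integration tests", lambda: True),  # would deploy ephemeral resources
        ("end-to-end tests", lambda: True),
    ]
    print(run_pipeline(pyramid))  # (False, ['static analysis', 'unit tests'])
```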

To strengthen security and compliance, combine automated tests with policy-as-code tools like Open Policy Agent (OPA) or HashiCorp Sentinel. These tools enforce rules - like blocking public S3 buckets - before deployment. Additionally, commenting terraform plan outputs on pull requests makes it easier for reviewers to verify changes.
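To make the S3 example concrete, here is the kind of rule OPA or Sentinel would encode, sketched in Python instead of Rego or the Sentinel language. It reads the JSON that terraform show -json produces for a saved plan and flags planned S3 ACLs set to public-read or public-read-write. Checking only the acl argument is a simplifying assumption; a real policy would also inspect public access block settings and bucket policies.

```python
import json
from typing import List

PUBLIC_ACLS = {"public-read", "public-read-write"}
S3_ACL_TYPES = {"aws_s3_bucket", "aws_s3_bucket_acl"}  # resources that accept an `acl` argument


def public_bucket_violations(plan_json: str) -> List[str]:
    """Return addresses of planned S3 resources that would grant a public ACL."""
    plan = json.loads(plan_json)
    violations = []
    for change in plan.get("resource_changes", []):
        if change.get("type") not in S3_ACL_TYPES:
            continue
        after = change.get("change", {}).get("after") or {}  # `after` is null for deletions
        if after.get("acl") in PUBLIC_ACLS:
            violations.append(change["address"])
    return violations


if __name__ == "__main__":
    # Usage: terraform plan -out=tfplan && terraform show -json tfplan > plan.json
    import sys

    with open(sys.argv[1], encoding="utf-8") as fh:
        found = public_bucket_violations(fh.read())
    for address in found:
        print(f"policy violation: {address} would be publicly readable")
    sys.exit(1 if found else 0)
```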

Promotes Scalability and Maintainability

Automated tests provide confidence for safe refactoring, letting engineers update complex infrastructure without worrying about breaking existing services. When infrastructure is broken into smaller, reusable modules, tests for each module help ensure consistency and reduce the risk of configuration drift as your environment grows.

For integration testing, ephemeral environments and unique resource naming are effective strategies to avoid conflicts while managing costs.
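Unique naming can be as simple as appending the pull request number and a random suffix. This is a Python sketch. The name format and the 63-character limit (the maximum length of an S3 bucket name or a DNS label) are assumptions to adapt to whichever resources you create.

```python
import re
import secrets


def ephemeral_name(prefix: str, pr_number: int, max_len: int = 63) -> str:
    """Build a unique, lowercase, DNS-safe name such as 'my-vpc-pr42-3f9a1c2b'."""
    # Lowercase and replace anything outside [a-z0-9-] with a hyphen.
    base = re.sub(r"[^a-z0-9-]", "-", prefix.lower()).strip("-")
    tail = f"-pr{pr_number}-{secrets.token_hex(4)}"  # 8 random hex chars per run
    # Trim the prefix if needed, never the tail that keeps parallel runs apart.
    keep = max(1, max_len - len(tail))
    return base[:keep].rstrip("-") + tail


if __name__ == "__main__":
    print(ephemeral_name("network-test", 42))  # random suffix differs on every run
```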

Strengthens Security and Compliance

Automated testing helps "shift security left", catching vulnerabilities during development instead of after deployment. Ioannis Moustakis, a Solutions Architect at AWS, explains:

Testing IaC proposes a fundamental shift left... catching and blocking errors before they reach production environments, where fixes cost exponentially more.

Regular drift detection checks - scheduled daily or weekly - can highlight when manual changes cause infrastructure to deviate from your code. Terraform 1.5 introduced check blocks, which validate infrastructure health (for example, that an endpoint responds) on every plan and apply and report failures as warnings without blocking the run; running plans on a schedule turns them into continuous checks. This keeps your environment aligned with your version-controlled source of truth.
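In practice, a scheduled terraform plan -detailed-exitcode run is the usual drift signal: exit code 2 means the plan is non-empty, which on an unchanged main branch points to drift. The Python sketch below only illustrates the comparison underneath, using hypothetical dictionaries of declared and observed attributes keyed by resource address.

```python
from typing import Any, Dict

Attrs = Dict[str, Any]


def detect_drift(desired: Dict[str, Attrs], actual: Dict[str, Attrs]) -> Dict[str, Dict[str, Any]]:
    """Return {address: {attribute: (desired, actual)}} for every mismatch.

    Also reports resources deleted out-of-band ('<missing>') and resources
    created by hand that the code does not manage ('<unmanaged>').
    """
    drift: Dict[str, Dict[str, Any]] = {}
    for address, want in desired.items():
        have = actual.get(address)
        if have is None:
            drift[address] = {"<missing>": (want, None)}
            continue
        diffs = {key: (value, have.get(key)) for key, value in want.items() if have.get(key) != value}
        if diffs:
            drift[address] = diffs
    for address in actual.keys() - desired.keys():
        drift[address] = {"<unmanaged>": (None, actual[address])}
    return drift


if __name__ == "__main__":
    declared = {"aws_instance.web": {"instance_type": "t3.micro"}}
    observed = {"aws_instance.web": {"instance_type": "t3.large"}}  # resized in the console
    print(detect_drift(declared, observed))
    # {'aws_instance.web': {'instance_type': ('t3.micro', 't3.large')}}
```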

3. Build Modular and Reusable Infrastructure Components

Breaking your infrastructure code into smaller, focused modules - like VPCs, IAM roles, or Kubernetes node pools - makes managing and testing your setup much easier. This modular approach organizes infrastructure into logical units that you can version, test, and share across multiple projects.

Promotes Scalability and Maintainability

Using modular infrastructure aligns with the DRY (Don't Repeat Yourself) principle, which eliminates redundant code. Fix or improve a module once, and every environment that references it picks up the change when it adopts the new module version. This saves time and reduces operational overhead and technical debt. In one multi-environment setup, for instance, modularization cut configuration code from 3,600 lines to 1,140. Isolating state per component also limits the blast radius of a failed deployment and avoids state-locking contention when multiple teams work simultaneously. Gabriel Thompson, a technical writer on IaC, puts it this way:

The real promise is not reuse for its own sake. It is the ability to describe infrastructure in terms of architecture instead of individual resources.

Improves Collaboration and Reduces Errors

Modular design also fosters better teamwork. By using clear variable and output contracts, modules create interfaces that reduce the chances of unintended changes. This approach simplifies collaboration and minimizes errors. HashiCorp emphasizes the importance of keeping modules simple:

Modules should be opinionated and designed to do one thing well. If a module's function or purpose is hard to explain, the module is probably too complex.
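The variable-and-output contract can be illustrated outside Terraform too. In this Python sketch, a hypothetical network module accepts only validated, typed inputs (playing the role of variables.tf with validation blocks) and exposes a small, fixed set of outputs (playing the role of outputs.tf). The names and limits are assumptions chosen for illustration.

```python
from __future__ import annotations

import ipaddress
import itertools
from dataclasses import dataclass


@dataclass(frozen=True)
class NetworkInputs:
    """Input contract for a hypothetical network module (cf. variables.tf)."""

    name: str
    cidr_block: str
    az_count: int = 2

    def __post_init__(self) -> None:
        # Fail on bad inputs before anything is built, like a validation block at plan time.
        ipaddress.ip_network(self.cidr_block)  # ValueError if malformed or host bits set
        if not 1 <= self.az_count <= 3:
            raise ValueError("az_count must be between 1 and 3")


@dataclass(frozen=True)
class NetworkOutputs:
    """Output contract (cf. outputs.tf): the only values callers may depend on."""

    vpc_name: str
    subnet_cidrs: tuple[str, ...]


def network_module(inputs: NetworkInputs) -> NetworkOutputs:
    """Split the VPC range into quarters and hand one to each availability zone."""
    network = ipaddress.ip_network(inputs.cidr_block)
    quarters = itertools.islice(network.subnets(prefixlen_diff=2), inputs.az_count)
    return NetworkOutputs(
        vpc_name=f"{inputs.name}-vpc",
        subnet_cidrs=tuple(str(s) for s in quarters),
    )
```

Callers depend only on NetworkOutputs, so the module's internals can change without breaking them as long as the contract holds.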

Standardizing the module structure with files like main.tf, variables.tf, outputs.tf, and versions.tf improves code readability and makes onboarding new team members faster. Adopting semantic versioning (MAJOR.MINOR.PATCH) helps signal breaking changes and allows teams to pin modules to stable versions (e.g., ~> 1.2, which accepts any 1.x release from 1.2 onward but not 2.0). Adding input validation with type constraints and custom validation blocks ensures configuration errors are caught early, during plan, instead of at apply time.
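The ~> operator lets only the rightmost specified version component increase. Here is a Python sketch of that rule, assuming plain numeric MAJOR.MINOR or MAJOR.MINOR.PATCH versions (pre-release tags are not handled).

```python
from typing import Tuple


def _parse(version: str) -> Tuple[int, ...]:
    """'v1.2.0' -> (1, 2, 0); a leading 'v' (common in Git tags) is ignored."""
    return tuple(int(part) for part in version.strip().lstrip("v").split("."))


def _pad(parts: Tuple[int, ...], width: int) -> Tuple[int, ...]:
    """Right-pad with zeros so tuples of different lengths compare correctly."""
    return parts + (0,) * (width - len(parts))


def satisfies_pessimistic(version: str, constraint: str) -> bool:
    """Check `version` against a '~> X.Y' or '~> X.Y.Z' constraint.

    '~> 1.2'   allows >= 1.2.0 and < 2.0.0
    '~> 1.2.0' allows >= 1.2.0 and < 1.3.0
    """
    lower = _parse(constraint.replace("~>", "", 1))
    if len(lower) < 2:
        raise ValueError("constraint needs at least MAJOR.MINOR")
    # Drop the last component and bump the one before it to get the exclusive upper bound.
    upper = lower[:-2] + (lower[-2] + 1,)
    current = _parse(version)
    width = max(len(lower), len(current))
    return _pad(lower, width) <= _pad(current, width) < _pad(upper, width)
```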

Supports Automation and CI/CD Integration

Modular components work seamlessly with automated pipelines. Smaller, focused modules are easier to validate using tools like Terratest or TFLint. Pinning module versions in CI/CD pipelines ensures consistent deployments and protects against unexpected upstream changes.

Separating high-volatility resources (like application servers) from low-volatility ones (like databases) allows CI/CD pipelines to handle frequent application updates without disrupting stable infrastructure. As HashiCorp explains:

Terraform modules are self-contained pieces of infrastructure-as-code that abstract the underlying complexity of infrastructure deployments. They speed adoption and lower the barrier of entry for Terraform end users.

This modular approach not only supports automation but also strengthens security practices.

Strengthens Security and Compliance

Modules can enforce privilege boundaries by grouping resources, which enhances security and compliance. This segregation of duties ensures that security is managed at the component level. Modules can also enforce compliance standards by setting constraints - like preventing public subnets - before provisioning resources.

When combined with policy-as-code tools like Open Policy Agent (OPA) or HashiCorp Sentinel, modular infrastructure allows for automatic validation of module inputs and enforcement of security policies. A private Terraform registry (stocked with in-house modules or vetted modules from the public registry) further bolsters security by providing a centralized, versioned catalog where teams can access infrastructure blueprints that have already passed security reviews.

4. Maintain Consistent Environments Across Development Stages

Keeping development, staging, and production environments consistent is crucial to avoiding deployment failures. Even with solid version control and modular code, discrepancies between environments - caused by manual adjustments or undocumented changes - can lead to code passing all tests but still failing in production. Treating this uniformity as a core principle ensures smooth and predictable releases.

This consistency doesn’t just simplify deployments - it also lays the groundwork for effective automation and testing.

Supports Automation and CI/CD Integration

A consistent environment is the backbone of reliable automation. High-performing DevOps teams, which depend on this kind of consistency, report 127 times faster lead times from code commit to production and 182 times more frequent deployments than low performers. In contrast, mismatched environments can cause false positives during testing or let production defects slip through.

One way to maintain consistency is by parameterizing infrastructure code to manage environment-specific differences. For example, instead of duplicating configurations, store details like instance sizes, regions, or CIDR blocks in variable files (e.g., dev.tfvars, prod.tfvars). This approach allows CI/CD pipelines to automatically spin up temporary test environments for pull request validation - and clean them up afterward.
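Per-environment variable files work like a shared set of declared defaults plus small overrides. The Python sketch below models that idea with hypothetical values; in Terraform itself you would select the file with terraform plan -var-file=prod.tfvars. Rejecting undeclared keys keeps every environment structurally identical, so only the values differ.

```python
from typing import Any, Dict

# Hypothetical declared variables with defaults (cf. variables.tf).
BASE: Dict[str, Any] = {
    "region": "us-east-1",
    "instance_type": "t3.micro",
    "vpc_cidr": "10.0.0.0/16",
    "min_nodes": 1,
}

# Hypothetical per-environment overrides (cf. dev.tfvars, prod.tfvars).
OVERRIDES: Dict[str, Dict[str, Any]] = {
    "dev": {},
    "staging": {"vpc_cidr": "10.10.0.0/16"},
    "prod": {"instance_type": "m5.large", "vpc_cidr": "10.20.0.0/16", "min_nodes": 3},
}


def resolve(env: str) -> Dict[str, Any]:
    """Merge one environment's overrides onto the shared defaults."""
    overrides = OVERRIDES[env]
    unknown = set(overrides) - set(BASE)
    if unknown:
        # A typo like 'instance_typ' would otherwise silently fall back to the default.
        raise ValueError(f"{env} sets undeclared variables: {sorted(unknown)}")
    return {**BASE, **overrides}
```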

Promotes Scalability and Maintainability

Consistency also helps tackle technical debt by preventing configuration drift - the gradual buildup of manual changes that can destabilize infrastructure. As Bryan Krausen aptly puts it:

"Maintainability isn't just a buzzword; it's your shield against drift, a powerful reducer of production outages, and a key driver for lower operational costs."

To keep configurations predictable, use remote state backends with locking to safeguard state integrity. Tools like terraform fmt and TFLint can enforce formatting and coding standards through pre-commit hooks, ensuring a uniform codebase across all stages.

Strengthens Security and Compliance

Separate environments using distinct accounts or state files to limit the impact of potential security breaches. Organizations that adopt advanced Infrastructure as Code (IaC) practices report 86% stronger security and 84% greater agility in provisioning infrastructure. Consistent configurations ensure that security policies, IAM roles, and compliance rules are applied uniformly. Automated tools like tfsec or Checkov can identify misconfigurations early in the development process.

Policy-as-code tools, such as Open Policy Agent (OPA), can further enhance security by automatically verifying that infrastructure changes align with established standards before deployment. This automation prevents non-compliant resources - like unencrypted storage buckets - from reaching production, turning compliance into a built-in safeguard rather than a manual process. By maintaining uniform environments, teams can achieve reliability, security, and scalability as part of their IaC practices.

5. Manage Secrets Securely and Enforce Policies

Storing secrets directly in your Infrastructure as Code (IaC) files - like API keys, tokens, or passwords - is a major security risk. If these secrets are hardcoded into .tf files or committed to version control systems, they can expose critical parts of your infrastructure, including databases and cloud accounts. Dillon Watts from Doppler highlights this danger:

"Hardcoded secrets in Terraform represent one of the most common and dangerous security practices in modern infrastructure management."

Even one leaked secret can jeopardize your entire infrastructure, affecting areas like networking and identity management.

Strengthens Security and Compliance

To mitigate these risks, use dedicated secrets management tools such as HashiCorp Vault, AWS Secrets Manager, or Google Secret Manager. These tools let you fetch credentials dynamically at runtime, so sensitive values never live in your code. Be aware that a value read through an ordinary data source is still recorded in the state file; Terraform 1.10's ephemeral resources avoid this by making secrets available during the plan and apply phases without persisting them to the plan or state.

The scale of the issue is significant. In 2023 alone, public GitHub repositories saw 6 million new secrets leaked, many embedded in IaC files. To combat this, policy-as-code tools like Open Policy Agent (OPA) and Checkov can enforce security rules automatically. For example, they can block deployments of publicly accessible S3 buckets or unencrypted databases before they reach production. Organizations that adopt these automated guardrails report an 82% reduction in cloud misconfiguration-related security incidents.

These controls also fit naturally into CI/CD workflows, where the handling of sensitive credentials can be automated end to end.

Supports Automation and CI/CD Integration

Beyond securing secrets, automation tools help ensure these credentials never appear in your codebase.

For instance, AWS Secrets Manager can automate secret rotation, keeping credentials short-lived and shrinking the attack surface. Dynamic secrets with limited lifespans add an extra layer of protection. You can also pass sensitive values into Terraform at runtime through environment variables with the TF_VAR_ prefix (for example, TF_VAR_db_password sets the db_password variable), which keeps them out of .tf and .tfvars files and out of your repository.
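Here is a Python sketch of the TF_VAR_ approach: secret values are placed only in the child process environment, never in a file. Where the values come from (a secrets manager client, for example) is left as an assumption. Keep in mind that a value Terraform uses in a resource argument can still end up in state, so the matching variable should also be marked sensitive.

```python
import os
from typing import Dict, Mapping, Optional


def terraform_env(
    secret_values: Mapping[str, str],
    base_env: Optional[Mapping[str, str]] = None,
) -> Dict[str, str]:
    """Return a copy of the environment with each secret exposed as TF_VAR_<name>.

    Terraform reads TF_VAR_db_password into the input variable `db_password`.
    The parent's environment (or `base_env`) is copied, never modified.
    """
    env = dict(os.environ if base_env is None else base_env)
    for name, value in secret_values.items():
        env[f"TF_VAR_{name}"] = value
    return env


# Usage sketch (fetch_db_password is a hypothetical call to your secrets manager):
# import subprocess
# subprocess.run(
#     ["terraform", "apply", "-auto-approve"],
#     env=terraform_env({"db_password": fetch_db_password()}),
#     check=True,
# )
```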

To catch hardcoded secrets early, integrate scanning tools like GitGuardian or TruffleHog into your CI/CD pipeline, and run them as pre-commit hooks so secrets never reach your repository. Marking variables as sensitive (with the sensitive = true argument) redacts them from plan and apply output, but Terraform still stores their values in plain text in the state file. That is why state files belong in secure remote backends like S3 or Google Cloud Storage, with encryption and strict access controls applied.
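To show what such a hook does, here is a deliberately small Python scanner. It is not how GitGuardian or TruffleHog work internally; the three regex rules are illustrative assumptions, and a non-zero exit code is what lets a pre-commit hook block the commit.

```python
from __future__ import annotations

import re
import sys

# A few illustrative rules. Real scanners ship far more detectors, plus
# entropy analysis and live credential verification.
RULES = {
    "aws_access_key_id": re.compile(r"\b(?:AKIA|ASIA)[0-9A-Z]{16}\b"),
    # A quoted literal assigned to a *password/*secret/*token attribute; values
    # containing '$' (interpolations such as "${var.db_password}") are skipped.
    "hardcoded_secret": re.compile(r'(?i)(password|secret|token)\s*=\s*"[^"$]{4,}"'),
    "private_key": re.compile(r"-----BEGIN (?:RSA |EC |OPENSSH )?PRIVATE KEY-----"),
}


def scan(text: str, filename: str = "<input>") -> list[tuple[str, int, str]]:
    """Return (filename, line_number, rule_name) for every line that trips a rule."""
    findings = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for rule, pattern in RULES.items():
            if pattern.search(line):
                findings.append((filename, lineno, rule))
    return findings


def main(paths: list[str]) -> int:
    """Pre-commit entry point: scan the staged files passed as arguments."""
    findings = []
    for path in paths:
        with open(path, encoding="utf-8", errors="replace") as fh:
            findings.extend(scan(fh.read(), path))
    for path, lineno, rule in findings:
        print(f"{path}:{lineno}: possible {rule}")
    return 1 if findings else 0  # non-zero exit blocks the commit


if __name__ == "__main__":
    sys.exit(main(sys.argv[1:]))
```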

Conclusion

To achieve dependable and scalable cloud deployments, focus on the practices outlined above. These steps can turn manual infrastructure management into an automated, repeatable process. Version control provides an auditable history and enables quick rollbacks when issues arise. Automated testing catches configuration errors before they hit production. Modular components allow you to construct large-scale systems using trusted, reusable elements. Consistent environments eliminate the dreaded "it works in dev but not in production" scenarios. Lastly, secure secrets management and policy enforcement ensure that security measures scale seamlessly with your infrastructure.

Consider this: organizations leveraging Infrastructure as Code report 63% faster provisioning and 56% better consistency. At its core, Infrastructure as Code is about building systems that manage cloud resources reliably and repeatably. These benefits are built on the foundation of version control, automated testing, modular design, consistent environments, and secure secrets management. Together, these practices enable faster deployments while maintaining scalability and agility.

Treat your infrastructure with the same level of care as your application code. Tools like Kanu AI can simplify this process by automating code generation, performing extensive validation checks, and offering actionable insights. With features such as AI-driven diagnostics, pull request generation, and SOC 2 compliance, Kanu helps you implement these best practices while keeping security and control intact.

Start adopting these approaches today to boost reliability, speed, and team confidence. By integrating these workflows, your team can drive continuous innovation without sacrificing security or stability.

FAQs

What should I store in Git vs keep out of my IaC repo?

Store all the infrastructure code that defines your cloud resources - like Terraform configuration files, modules, and scripts - directly in your Git repository. This approach allows for version control, tracking changes, and team collaboration on your infrastructure setup.

However, make sure to keep sensitive information, such as secrets, passwords, API keys, and credentials, out of the repository. Instead, rely on secure storage solutions like secret management tools or environment variables to safeguard this data and uphold security standards.

How do I test IaC changes without deploying real cloud resources?

You can test Infrastructure as Code (IaC) changes using tools that validate configurations locally or simulate cloud responses. For instance, Terraform provides terraform validate and a native test framework (terraform test) that can run in plan mode or against mocked providers, so you can confirm your configurations work as intended without creating resources.

To catch issues early in the development process, static analysis tools such as tflint and Checkov are invaluable: they identify errors and misconfigurations in your code before deployment. Tools like Terratest, by contrast, usually deploy real (ideally short-lived) resources, so they belong in a later CI stage that runs only after the cheaper checks pass.

Static analysis, validation, and mocked unit tests let you check configurations without deploying actual resources, which helps to minimize both risks and costs.

How can I prevent configuration drift between dev, staging, and prod?

Preventing configuration drift is crucial for maintaining consistent infrastructure across environments. Using Infrastructure as Code (IaC) tools like Terraform or Pulumi helps ensure infrastructure remains consistent and predictable through idempotency. These tools allow you to define your infrastructure in code, making it easier to manage and replicate.

To detect drift, schedule regular checks that compare your intended configuration with the actual state, for example a CI job that runs terraform plan and flags any pending changes. Organize your environments by using separate configuration files, which can simplify management and reduce overlap.

Promote changes through version control systems and CI/CD pipelines to maintain a clear history of updates and automate deployments. Automating configuration management and enforcing policies further minimizes manual errors, ensuring uniformity across development, staging, and production environments. This approach builds a more reliable and scalable infrastructure.